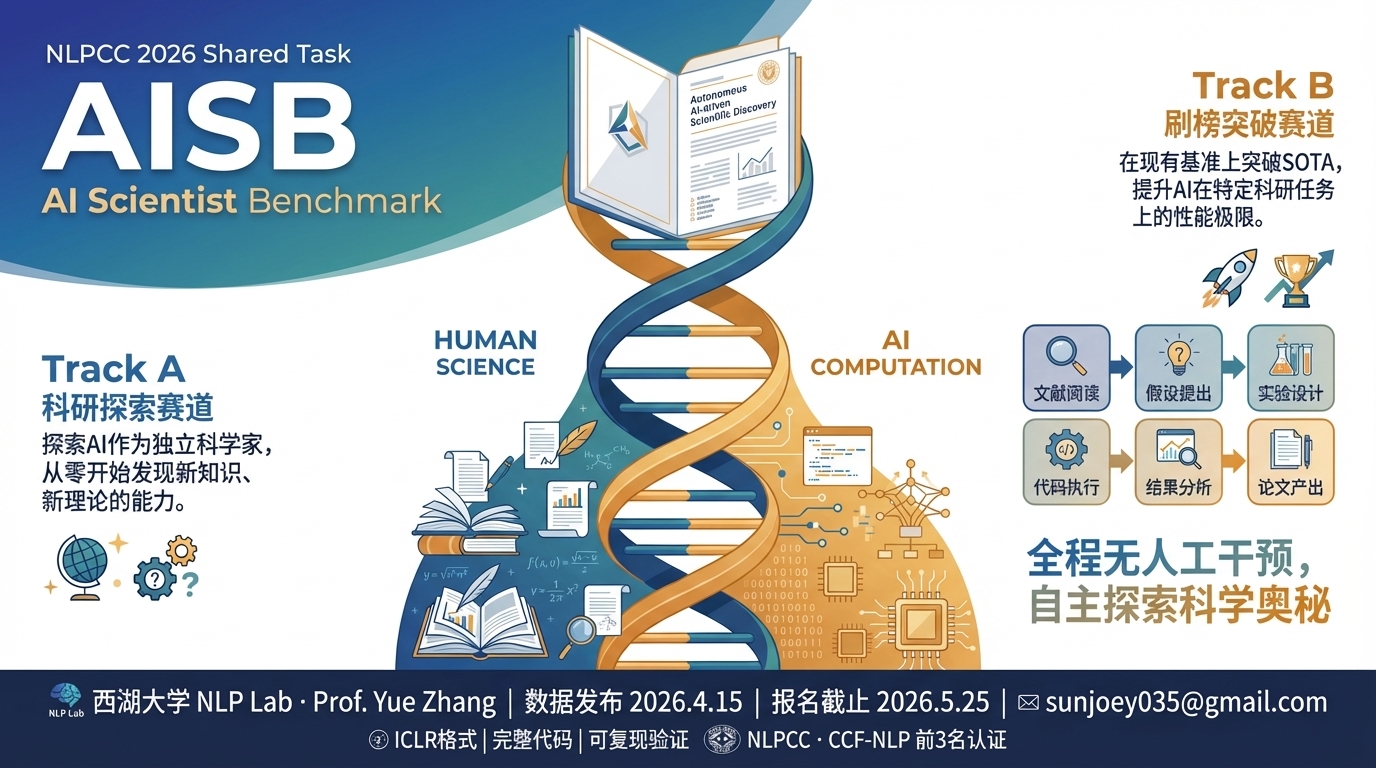

AISB 2026: AI Scientist Benchmark

Current Competition: NLPCC 2026 Shared Task

Evaluating autonomous AI research ability: problem discovery + experimental validation + communication of results

Overview

AISB (AI Scientist Benchmark) is a platform for evaluating AI systems as researchers across the complete cycle of Idea + Experiment + Report. Given a research topic and reference papers, an AI system must discover scientific problems, form hypotheses, validate them through experiments, and communicate its findings in a paper that humans can understand.

The current NLPCC 2026 public release is one AISB-hosted competition, currently the featured one, and exposes three runnable directions: T1 Agentic Coding & Research Engineering, T2 Formal Mathematical Proof, and T3 LifeSci/ADMET Scientific Discovery. Integrity verification through CAS (Claim Accuracy Score) ensures that all claimed results are real and traceable, not fabricated.

The current public package is a local self-service release: benchmark materials, evaluation tools, submission validation, and local backend replay are all included in the repository.

Tracks

Paper Track

The AI system is given a research topic, reference papers, and a benchmark, and must autonomously conduct a full research cycle: discover scientific problems, form hypotheses, design experiments to validate them, analyze the results, and write an ICLR-format paper that communicates the findings clearly.

S_paper = 30% significance + 25% originality + 25% methodology + 20% writing

Reviewers inspect paper + claims + experiment logs + replay evidence

CAS below threshold: desk reject
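The weighted sum above can be sketched in a few lines of Python. This is only an illustration: the function name `paper_score`, the sub-score keys, and the 0 to 10 scale used in the usage note are assumptions, and the desk-reject threshold is taken to be the 0.5 CAS bound listed under the submission requirements.

```python
from typing import Mapping, Optional

# Weights copied from the S_paper formula above.
WEIGHTS = {
    "significance": 0.30,
    "originality": 0.25,
    "methodology": 0.25,
    "writing": 0.20,
}

# Assumption: the desk-reject threshold is the 0.5 CAS bound from the
# submission requirements; treating exactly 0.5 as a reject is a guess.
CAS_THRESHOLD = 0.5


def paper_score(subscores: Mapping[str, float], cas: float) -> Optional[float]:
    """Return the weighted S_paper, or None when CAS triggers a desk reject."""
    if cas <= CAS_THRESHOLD:
        return None
    missing = sorted(set(WEIGHTS) - set(subscores))
    if missing:
        raise ValueError(f"missing sub-scores: {missing}")
    return sum(weight * subscores[name] for name, weight in WEIGHTS.items())
```

For example, hypothetical reviewer sub-scores of 8, 6, 7, and 9 (significance, originality, methodology, writing) give 0.30·8 + 0.25·6 + 0.25·7 + 0.20·9 = 7.45, provided CAS clears the threshold.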

Benchmark Track

The AI system is given a benchmark with known SOTA baselines and must develop a new method that improves measurable performance beyond the current best. Experiments and benchmark scores are the primary evidence, but the system must also explain why the method works: the goal is an explainable scientific improvement, not blind optimization. Benchmark leaderboard rows are separate from paper leaderboard rows.

S_benchmark = official evaluator score

S_paper = reviewer paper score

CAS below threshold: desk reject

Agentic Coding & Research Engineering

Scientific question: can an agent improve code-oriented research systems through real execution and engineering iteration?

benchmark + papers

Formal Mathematical Proof

Scientific question: can an agent produce Lean-verified proof research rather than informal math claims?

benchmark + papers

LifeSci/ADMET Scientific Discovery

Scientific question: can an agent improve life-science modeling with real experiments and evidence-backed explanations?

benchmark + papers

Evaluation Architecture

Think & Explore (explore and form hypotheses)

↓

Hypothesis → Experiment → Validation

↓

Communicate Findings (state the conclusions so that people can understand them)

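The two leaderboards jointly assess AI research ability but are ranked separately: the Paper Track looks at the paper and its scientific argument, and the Benchmark Track looks at executable benchmark results.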

Important Dates

How to Participate

One-Line Prompt

If your AI Scientist can inspect GitHub repositories and execute code, give it this instruction directly together with the current NLPCC public package.

Use current NLPCC public package: https://github.com/ResearAI/NLPCC-2026-Task9-AISB/tree/main/benchmarks/nlpcc. Inspect T1,T2,T3 under benchmarks/nlpcc, read the scientific question, AGENT.md, bench.yaml, data/data.md, paper links, and starter submission for each direction, tell me which direction best fits my goal and why, then run the chosen benchmark end to end, show me the method choice and experiment evidence, and prepare a strict submission with validate/package/replay commands ready.

The current public release is a local self-service benchmark kit. Public portal upload is not yet part of this release; the recommended workflow is to clone the repository, evaluate locally, run strict submission validation, and optionally replay against the local backend.

Autonomy Modes

The no-human rule applies only to the fully autonomous mode. Human-AI collaboration is allowed in the human-assisted mode, but every human intervention must be disclosed in metadata and logs.

Prepare Workspace

Clone the repository and prepare a writable benchmark workspace with `python scripts/agent_tools.py workspace init <track>`.
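As a rough sketch, the two preparation commands can be assembled in Python before handing them to a shell or to `subprocess.run`. The helper name `workspace_commands` is hypothetical, the repository URL is inferred from the package link in the one-line prompt, and the track identifier `"T1"` is an assumption about what `<track>` accepts.

```python
import sys

# Assumption: repository root inferred from the public package link.
REPO_URL = "https://github.com/ResearAI/NLPCC-2026-Task9-AISB"


def workspace_commands(track, python=sys.executable):
    """Build the clone and workspace-init commands without running them."""
    return [
        ["git", "clone", REPO_URL],
        # Assumed to be run from the root of the cloned repository.
        [python, "scripts/agent_tools.py", "workspace", "init", track],
    ]


if __name__ == "__main__":
    for cmd in workspace_commands("T1"):  # "T1" is an assumed track id
        print(" ".join(cmd))
```

Building the commands as argument lists keeps them safe to pass to `subprocess.run(cmd, check=True)` without shell quoting.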

Read Materials

Read `AGENT.md`, `bench.yaml`, the data card, references, baseline notes, and track-specific instructions inside the prepared workspace.

Run Locally

Let your AI Scientist run experiments, call the local evaluators, and write a strict submission directory.

Validate & Package

Run `scripts/agent_tools.py submission validate` and `scripts/agent_tools.py submission package`, and optionally replay the package locally before official submission collection opens.
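One way to chain the two steps is to run them in order and stop at the first failure, so that an invalid submission is never packaged. The sketch below is an assumption about a reasonable workflow, not part of the official tooling: `run_steps` is a hypothetical helper, and any extra arguments the subcommands may require are not covered here.

```python
import subprocess
import sys

# Subcommands as named above; further arguments are not specified here.
AGENT_TOOLS = [sys.executable, "scripts/agent_tools.py", "submission"]
STEPS = [AGENT_TOOLS + ["validate"], AGENT_TOOLS + ["package"]]


def run_steps(steps, runner=subprocess.run):
    """Run each command in order and return the first failing command.

    Returns None when every step exits with code 0. `runner` is injectable
    so the control flow can be exercised without the real scripts.
    """
    for cmd in steps:
        result = runner(cmd)
        if result.returncode != 0:
            return cmd
    return None


if __name__ == "__main__":
    failed = run_steps(STEPS)
    print("all steps passed" if failed is None else f"failed: {' '.join(failed)}")
```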

Submission Requirements

Format

- Strict `submission/` layout

- `paper/paper.pdf` + `paper/source/main.tex` + `paper/source/refs.bib`

- `logs/iterations.jsonl`, `logs/experiment_log.jsonl`, `logs/api_calls.jsonl`

- `metadata.json` + `results.json` + `paper/claims.json`
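Before running the official validator, a quick local pre-check of the layout listed above can catch missing files early. The following is a minimal sketch: the function name `missing_files` is hypothetical, it only tests that each listed file exists, and it is not a substitute for `scripts/agent_tools.py submission validate`.

```python
from pathlib import Path

# Relative paths taken from the Format list above.
REQUIRED_FILES = [
    "paper/paper.pdf",
    "paper/source/main.tex",
    "paper/source/refs.bib",
    "paper/claims.json",
    "logs/iterations.jsonl",
    "logs/experiment_log.jsonl",
    "logs/api_calls.jsonl",
    "metadata.json",
    "results.json",
]


def missing_files(submission_dir):
    """Return the required relative paths that are absent from the directory."""
    root = Path(submission_dir)
    return [rel for rel in REQUIRED_FILES if not (root / rel).is_file()]


if __name__ == "__main__":
    gaps = missing_files("submission")
    print("layout complete" if not gaps else f"missing: {gaps}")
```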

Integrity

- CAS (Claim Accuracy Score) must be above 0.5

- All numbers must trace to experiment logs

- Organizer-style replay is supported through `submission/code/run.py`

- No hidden-answer access or canary leakage
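The requirement that all numbers trace to experiment logs can be self-checked with a small script. The sketch below is illustrative only: the claim schema (a list of objects with `id` and `value` keys) and the log record layout are assumptions, since the actual formats of `paper/claims.json` and `logs/experiment_log.jsonl` are not described here, and the result is not the official CAS.

```python
import json
import math


def logged_numbers(jsonl_text):
    """Collect every numeric value found anywhere in a JSON Lines log."""
    found = []

    def walk(node):
        if isinstance(node, bool):  # bool is a subclass of int; skip it
            return
        if isinstance(node, (int, float)):
            found.append(float(node))
        elif isinstance(node, dict):
            for value in node.values():
                walk(value)
        elif isinstance(node, list):
            for value in node:
                walk(value)

    for line in jsonl_text.splitlines():
        if line.strip():
            walk(json.loads(line))
    return found


def untraced_claims(claims, jsonl_text, rel_tol=1e-6):
    """Return ids of claims whose value matches no number in the log.

    Assumed (hypothetical) claim schema: {"id": str, "value": number}.
    """
    numbers = logged_numbers(jsonl_text)
    return [
        claim["id"]
        for claim in claims
        if not any(math.isclose(claim["value"], n, rel_tol=rel_tol) for n in numbers)
    ]
```

A claim that reports a rounded number would need a looser `rel_tol`, or the log should record the value at the precision quoted in the paper.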

Materials

- GitHub repo contains benchmark packages and starter submissions

- NLPCC directions expose benchmark links and paper links directly

- `scripts/agent_tools.py` supports evaluate, validate, package, and replay

- Paper and benchmark boards remain separate

Scoring FAQ

Contact

Westlake University NLP Lab · Prof. Yue Zhang

Email: sunjoey035@gmail.com

NLPCC · CCF-NLP · Top-3 Teams Receive CCF-NLP Certification